| S No | Use Cases |

Expected behaviour |

Design Choices | |

|

1 |

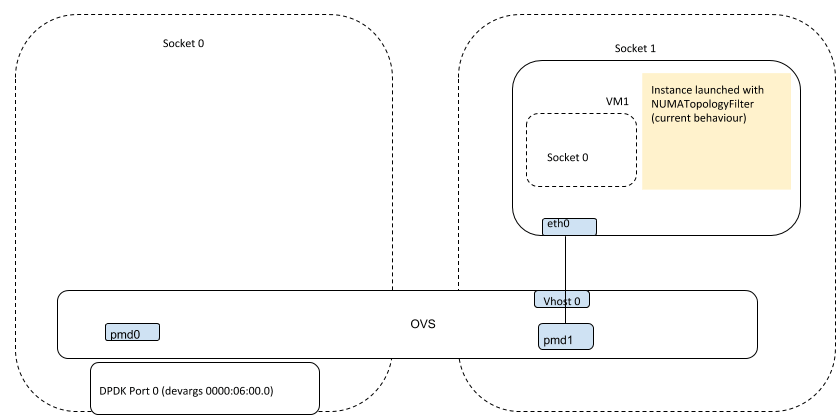

NUMA locality to PCI devices | In a dual numa node setup,with dpdk nic attached to numa node1, vm |

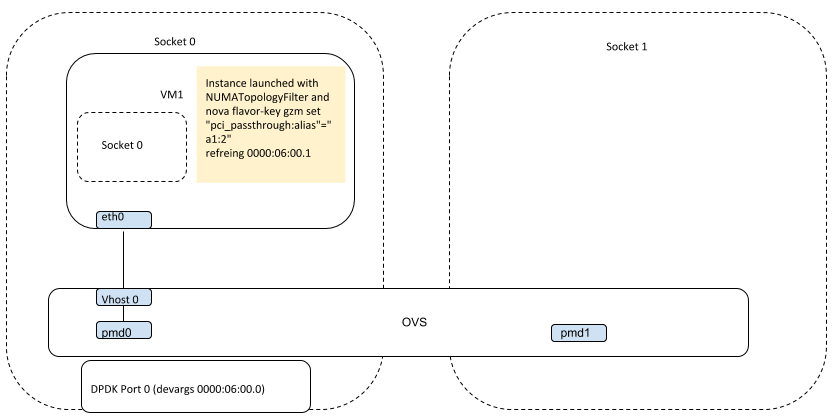

TODO:

1. Need to explore if pci numa awareness logic (added by patch https://review.openstack.org/#/c/140290/) with pci_passthrough:alias, could be used to schedule VM on the numa node associated with ovs dpdk port (using pci address, vendor id of dpdk nic) in non pci/sriov passthrough scenario 2. If not, identify a possible way to enhance the patch (numa_topology_filter) with minor tweaking. |

NUMA locality to PCI device

|

|

2 |

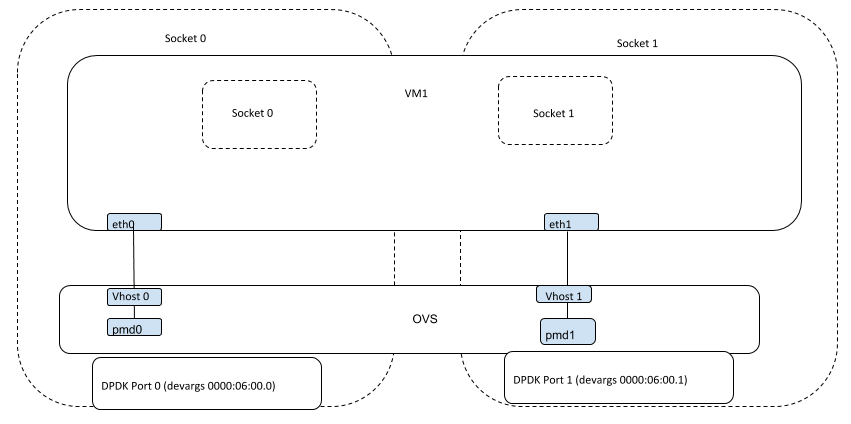

Cross Numa | In a dual numa node setup,with dpdk nic attached to numa node1, vm |

since scheduler automatically maps VM to free numa nodes, we do not have to worry about numa nodes being unused. | Cross NUMA

|

|

3 |

Pinning to specific numa node without pci reference | Not yet supported, in openstack | ||

|

4 |

multi node numa with dpdk pci devices in those nodes | In a dual numa node host setup,with two dpdk nics, each attached to different numa nodes(dpdk0 on numa node0 and dpdk1 on numa node1). A multi numa node guest spwaned with two VIF, should have the guest NUMA nodes along with the VIF be directly associated with host NUMA nodes corresponding to dpdk nics. | TODO:

NUMA / PCI device association feature in qemu/libvirt [1] need to be added to openstack for this to work. [1] |

Multi node NUMA with dpdk pci devices in those nodes

|

|

5 |

pci passthrough – NUMA locality to PCI devices | VM with pci device is spawned on host numa node associated with the pci device (with nic, dpdk0 on host numa node0, vm would be spawned on node0) | Current behaviour is nova will boot a VM with PCI devices from the NUMA node associated with the PCI device | |

|

6 |

pci passthrough – cross numa | https://bugzilla.redhat.com/show_bug.cgi?id=1366208 | TODO:

It will be resolved by BP [1]. Nova-scheduler will choose a compute host without any consideration for the NUMA affinity of PCI devices. However, nova-compute will attempt a best effort selection of PCI devices based on NUMA affinity. It is only if this fails that `nova-compute` will fall back to scheduling on a NUMA node that is not associated with the PCI device.” [1] https://review.openstack.org/#/c/361140/24/specs/queens/approved/share-pci-between-numa-nodes.rst |

|

|

7 |

pci passthrough – multi node numa with pci devices in those nodes | A multi numa node guest spwaned with two pci, should have the guest numa nodes along with pci devices passed, associated with host NUMA nodes corresponding to pci devices.(with nics, dpdk0 on host numa node0 and dpdk1 on host numa node1, a multi numa vm would be spawned on them, would have the guest numa node associated with dpck0 pci on node0 and guest numa node associated with dpck1 pci on node1 ) | NUMA / PCI device association feature in qemu/libvirt [1] need to be added to openstack for this to work.

[1] |

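The difference between use cases 5 and 6 (strict NUMA locality versus best-effort selection with a cross-NUMA fallback) can be illustrated with a small sketch. This is not Nova's code: `PciDevice`, `pick_pci_device`, and the `allow_cross_numa` flag are hypothetical names invented here, and the device addresses are made up. It only models the policy described in the table.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class PciDevice:
    """Hypothetical record for a free PCI device on a compute host."""
    address: str               # illustrative PCI address, e.g. "0000:05:00.0"
    numa_node: Optional[int]   # None when no NUMA affinity is reported


def pick_pci_device(
    devices: List[PciDevice],
    guest_node: int,
    allow_cross_numa: bool,
) -> Tuple[Optional[PciDevice], bool]:
    """Return (device, is_cross_numa) for a guest placed on guest_node.

    A device on the same host NUMA node is always preferred. Only when
    none exists and allow_cross_numa is True does the function fall back
    to a device on another node. (None, False) means no device fits.
    """
    local = [d for d in devices if d.numa_node == guest_node]
    if local:
        return local[0], False
    if allow_cross_numa and devices:
        # Best effort failed: accept a device from a different NUMA node.
        return devices[0], True
    return None, False
```

With `allow_cross_numa=False` the sketch behaves like use case 5, where the request fails if no device is local to the guest's node. With `allow_cross_numa=True` it follows the fallback order quoted in use case 6:

```python
devs = [PciDevice("0000:05:00.0", 0)]
device, cross = pick_pci_device(devs, guest_node=1, allow_cross_numa=True)
print(device.address, cross)
```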